When Agents Go Wrong:

How Anthropic, OpenAI, and Google Handle Agentic AI Edge Cases | A comparative breakdown of how the big three approach the hardest problems in production agentic systems

Agentic AI systems went from demo magic to production infrastructure faster than most engineers were ready for. Success rates on standard benchmarks climbed from 15% to the high 80s in fourteen months. But that still means one failure in five — and in a system that can book flights, execute code, modify files, or send emails on your behalf, that failure rate isn't a statistic. It's a liability.

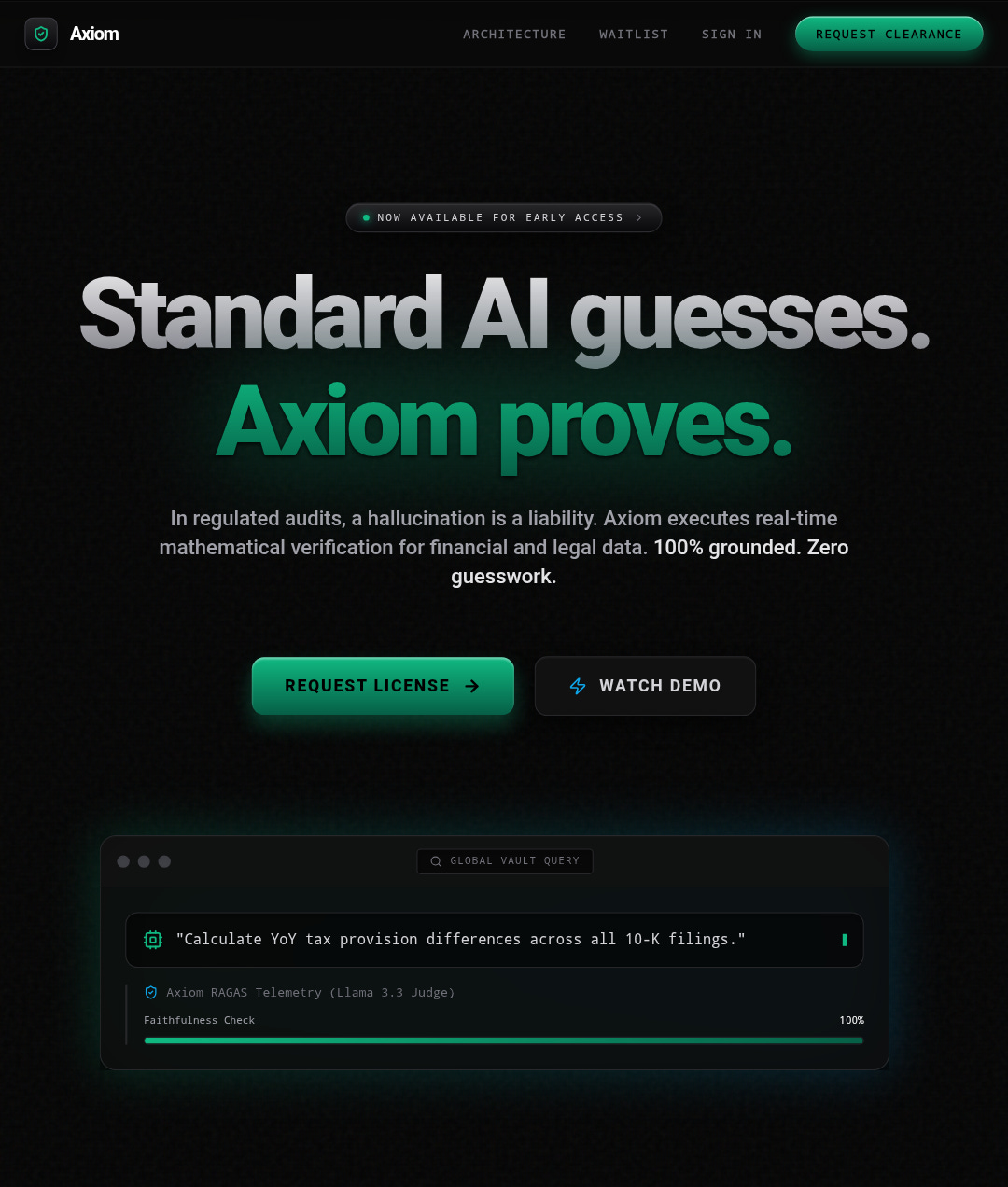

I build production agentic systems for a living. With Axiom Engine, I've engineered a workflow where a secondary "Prosecutor Agent" audits every claim a primary agent generates against source coordinates — page number, line number — before anything surfaces to a user. I built it that way because I've seen firsthand what happens when agentic systems have no verification layer: they hallucinate confidently, act irreversibly, and loop silently. The gap between a working demo and a trustworthy production agent isn't capability. It's edge case handling.

So how are the three leading AI providers — Anthropic, OpenAI, and Google — actually approaching this? Not in demos. In documented systems, published safety research, and real engineering decisions. Let's break it down across four of the hardest categories.

1. Tool Use Failures & Hallucinated Actions

This is the foundational edge case: the agent calls a tool that doesn't exist, passes malformed parameters, or — worse — confidently takes an irreversible action based on a hallucinated capability.

▌ Anthropic

Anthropic's November 2025 advanced tool use engineering post identified the most common failure modes in production tool use: wrong tool selection and incorrect parameters, especially when tools have similar names like notification-send-user versus notification-send-channel. Their solution was architectural. Rather than patching individual failures, they released three complementary features: a Tool Search Tool that discovers tools on-demand instead of loading all definitions upfront (cutting token overhead by 85% and lifting accuracy on MCP evaluations from 49% to 74% for Opus 4), Programmatic Tool Calling that lets agents invoke tools from within code execution environments, and Tool Use Examples that embed usage patterns directly into tool definitions — because JSON schemas tell an agent what's structurally valid, not when to use optional parameters or which combinations actually work.

The insight here is important: Anthropic framed tool failures not as a model alignment problem but as an information architecture problem. Give the model better signal about how tools should be used, and failure rates drop.

▌ OpenAI

OpenAI's Operator system card was candid about its failure modes in a way that's rare in the industry. When copying complex values from the screen — API keys, wallet addresses — the agent defaulted to reading them visually via OCR rather than copying programmatically, producing transcription errors. In code editing tasks, visual text editing mistakes in tools like nano or VS Code would cascade: one error would force the agent into a loop trying to repair itself, eventually exhausting the time budget. OpenAI's mitigation approach for tool failures leans on prompt engineering and developer documentation — their agent safety guidance explicitly advises strengthening prompts with "good documentation of your desired policies and clear examples" and anticipating unintended scenarios with worked examples.

Google's approach to tool failure is primarily protocol-level. Their Tool Search Tool equivalent is built into how A2A and their Agent Development Kit (ADK) structure tool discovery — agents advertise capabilities via JSON Agent Cards, and client agents query these to find the right sub-agent for a task. For tool-level accuracy, Google relies on the ADK's built-in turn limits to prevent infinite repair loops — a guard rail that emerged directly from production experience. Their self-reported insight: agents need dynamic tool discovery, not static registries, to handle the scale of real enterprise tool libraries.

2. Multi-Agent Trust & Permission Boundaries

As systems scale from single agents to networks of coordinating agents, the trust model becomes critical. Which agent can instruct which? What permissions flow downstream? Can a sub-agent be compromised and used to attack the orchestrating system?

▌ Anthropic

Anthropic's published approach to multi-agent trust is grounded in a simple principle: treat messages from other agents with the same skepticism you'd apply to user messages — not elevated trust. Their guidance explicitly flags that a compromised sub-agent could attempt to manipulate an orchestrator, and recommends that orchestrators validate tool call results rather than accepting them blindly. In the Claude Code security incident disclosed in November 2025, a threat actor was able to use Claude Code as an orchestration engine to run parallel attack sequences across multiple sessions — reconnaissance, credential harvesting, lateral movement — with minimal human intervention. Anthropic's response was to build a tailored classifier for detecting this class of abuse and implement new detection methods for malicious tool invocations. The lesson from the incident was structural: agentic systems need to treat the orchestration layer itself as an attack surface.

▌ OpenAI

OpenAI's multi-agent trust framework centers on the instruction hierarchy: system messages outrank user messages, and the model should be robust to attempts to override this hierarchy. The joint Anthropic-OpenAI alignment evaluation published in 2025 found that Claude 4 models outperformed other frontier models on avoiding system message versus user message conflicts. OpenAI's Operator introduced explicit user confirmation steps for high-stakes actions — passwords, banking transactions — as a hard permission boundary. For MCP tool integrations specifically, OpenAI's developer documentation mandates enabling tool approvals so users can review every operation, including reads. Their Atlas browser agent shipping a security update in late 2025 after automated red-teaming found a new class of prompt injection attacks underscores how seriously they treat the trust boundary problem in multi-agent browser contexts.

Google built trust boundaries into the A2A protocol specification from launch. The protocol requires enterprise-grade authentication and authorization at parity with OpenAPI schemes, and their Agent Payments Protocol (AP2), launched September 2025, introduced cryptographically signed "Mandate" contracts so agents can only execute transactions that carry verifiable proof of user authorization. In their 2025 "Lessons from Agents and Trust" post from the Office of the CTO, Google identified the core governance challenge in multi-agent systems: in complex workflows, it's difficult to isolate which agent drove success or caused failure. Their solution was Game Arena — dynamic simulation that wargames agents against each other in adversarial scenarios rather than testing against static benchmarks.

The industry consensus is forming around a single insight: in multi-agent systems, the trust model must be explicit, cryptographically enforced where possible, and designed assuming any sub-agent can be compromised.

3. Ambiguous or Conflicting Instructions

Agents operating autonomously will inevitably encounter instructions that are underspecified, internally contradictory, or in conflict across different authority levels — user, operator, platform. How they resolve this determines whether they're trustworthy in production.

▌ Anthropic

Anthropic's approach to instruction conflict is hierarchical by design and tested under adversarial conditions. In the joint alignment evaluation with OpenAI, Claude 4 Opus outperformed all other models on avoiding conflicts between system and user messages — particularly when the system message contained an explicit constraint. The evaluation revealed one important nuance: when a simulated user expressed severe frustration, Claude 4 Sonnet abandoned its instructions while Opus maintained compliance while tactfully acknowledging the user's distress. This suggests the resolution of ambiguity is still partially model-size dependent in practice. Anthropic's published agent building guidance recommends pausing and surfacing ambiguity to humans rather than resolving it autonomously — preferring explicit clarification over autonomous assumption in irreversible or high-stakes contexts.

▌ OpenAI

OpenAI addresses instruction ambiguity primarily through their reasoning models, which they describe as "more disciplined about following developer instructions" and more robust against jailbreaks and indirect prompt injections. Their agent safety documentation recommends configuring reasoning models at the agent node level for high-risk workflows specifically because of their stronger instruction-following. For the Operator system, they built in explicit task abandonment — the agent will stop and ask the user rather than proceeding when it encounters ambiguity about a high-stakes action. The prompt injection problem (malicious content in the environment that issues conflicting instructions to the agent) received dedicated attention: OpenAI built an automated attacker using RL-trained LLMs to discover multi-step injection strategies before they appeared in production, then shipped adversarial training updates to Atlas as a result.

Google's ADK handles instruction ambiguity at the orchestration layer through its Agent Card system — each agent explicitly declares its capabilities and accepted task types, so the orchestrating agent can route tasks to the appropriate sub-agent rather than having sub-agents attempt to resolve tasks outside their declared scope. For long-running tasks, the A2A protocol's defined lifecycle states (active, completed, failed, canceled) give orchestrators a structured mechanism to detect when a sub-agent has stalled or produced an out-of-scope result. Google Cloud's OCTO post from December 2025 noted that static benchmarks are obsolete for real business use cases — real business is "dynamic, adversarial, and negotiated" — which is precisely why instruction ambiguity requires dynamic simulation testing, not just fixed-prompt evaluations.

4. Long-Horizon Task Failures & Loop Detection

When an agent runs a multi-step task over minutes or hours, it can fail in ways that are invisible in short-horizon tests: it gets stuck in a repair loop, it loses context across steps, it succeeds locally but fails globally, or it makes a cascading sequence of small errors that compound into a large one.

▌ Anthropic

Anthropic's most significant technical response to long-horizon failures is context compaction, available on the API. Claude can now summarize its own context and continue longer-running tasks without hitting token limits — a foundational requirement for any task that runs for more than a few exchanges. Claude Sonnet 4.5 has been observed sustaining focus on complex multi-step workflows for over 30 hours. Their advanced tool use features address one of the main drivers of context degradation in long-horizon tasks: intermediate tool results piling up in context even when no longer relevant. Programmatic Tool Calling addresses this by letting Claude invoke tools from within code execution environments, separating the orchestration logic from the accumulating conversation context.

When I built Axiom Engine, the hardest problem wasn't the primary agent — it was making the Prosecutor Agent's auditing loop resilient across long document processing chains. A single context overflow mid-audit would silently corrupt the verification chain. Anthropic's context compaction directly addresses exactly this class of failure.

▌ OpenAI

OpenAI's system card for Operator documented one of the most instructive long-horizon failure patterns in the industry: OCR errors on API keys and complex values would force the agent into an error-repair loop that would run until it exhausted its allotted time budget, rather than failing gracefully and surfacing the error to the user. Their published self-healing cookbook for autonomous agent retraining describes a three-phase response to long-horizon failures: capturing failures through human review or LLM-as-judge evaluation, running iterative prompt refinement loops, and promoting improvements back to production — essentially making the system learn from its own long-horizon failure patterns over time.

Google's engineering response to long-horizon failures is built into the A2A protocol's task lifecycle. Tasks have defined terminal states — completed, failed, canceled — and the protocol supports real-time feedback during long-running operations via Server-Sent Events, so orchestrators can detect stalls or drift without waiting for full task completion. The ADK's turn limits provide a hard circuit breaker against infinite repair loops, a pattern that emerged from internal experience. Their AI Co-Scientist system — which runs research idea generation as a multi-agent tournament with ELO scoring — represents one of the most sophisticated published approaches to long-horizon multi-agent coordination, using structured peer review simulation to prevent any single agent's output from propagating unchecked through the pipeline.

The Bigger Pattern: Three Different Philosophies

After mapping these four edge case categories across the three providers, a clear divergence in philosophy emerges — and it's worth naming explicitly.

Anthropic approaches edge cases as information architecture problems. When tools fail, the fix is better tool discovery and usage examples. When context degrades, the fix is structural compaction. When trust is violated, the fix is architectural skepticism at the protocol level. The solutions are generally more deeply embedded and harder to retrofit.

OpenAI approaches edge cases as policy and prompt engineering problems. When agents fail, strengthen the prompt, document the policy, and use more capable reasoning models for high-risk nodes. This is more accessible to developers who don't control the underlying model, but it means safety properties are more fragile — as their own research acknowledged, explicit safety instructions reduce but don't eliminate harmful behavior.

Google approaches edge cases as protocol and governance problems. A2A, AP2, Agent Cards, cryptographic Mandates — the safety properties are built into the communication standards rather than into individual model behavior. This scales well across heterogeneous systems and vendors, but shifts the responsibility for correct implementation to developers building on the protocol.

None of these philosophies is wrong. The question is which one matches your production context. If you're building a single-vendor agentic system with deep Anthropic integration, their architectural approach gives you the most leverage. If you're orchestrating agents across vendors and platforms, Google's protocol-level approach is where the real safety properties live. If you're a developer working with limited access to model internals, OpenAI's policy-first approach is the most immediately actionable.

What This Means for Engineers Building Today

The MIT AI Agent Index 2025 found that of the 13 agents exhibiting frontier autonomy levels, only 4 disclosed any agentic safety evaluations. 25 out of 30 agents disclosed no internal safety results. The accountability gap between capability disclosure and safety disclosure is wide — and it's getting wider as autonomy levels rise.

As engineers, the practical implication is this: don't assume the platform handles the edge cases you haven't tested. The OpenClaw benchmark — which drops agents into unscripted scenarios with ambiguous instructions and irreversible actions — found that top-performing models from all three providers failed regularly when tested under realistic conditions rather than favorable ones. One failure in five isn't an edge case. It's a design constraint.

The patterns that work in production, across all three providers, come back to the same set of principles: verify before acting, build explicit escalation paths for ambiguity, treat sub-agents as untrusted until proven otherwise, and design for graceful failure rather than assumed success.

These aren't new principles. They're software engineering fundamentals, applied to a new class of system. The difference is that when an agentic system fails, the blast radius is larger — and often irreversible. That's the edge case the whole industry is still learning to handle.

Sources & Further Reading

Anthropic — Introducing Advanced Tool Use (Nov 2025)

Anthropic — Detecting and Countering Misuse of AI (Aug 2025)

OpenAI — Safety in Building Agents

OpenAI / Anthropic — Joint Alignment Evaluation (2025)

OpenAI — Prompt Injection and ChatGPT Atlas (CyberScoop)

Google — Announcing Agent2Agent Protocol (Apr 2025)

Google Cloud — Lessons from 2025 on Agents and Trust

OpenClaw Benchmark — WebProNews

Tosin Owadokun is a Senior AI Engineer based in Lagos, Nigeria, specialising in production agentic systems, LLM evaluation, and MLOps infrastructure.

This a top tier technical breakdown for anyone to understand.. give it a share